Compiled by Jane Lubenskyi

This presentation includes an initial overview, followed by links to working examples in the Siril software.

First Presented To Montreal RASC Zoom Meeting : Sept 22 2021

A Note about this presentation to Montreal RASC

This will be a 30-minute presentation on using the Siril software for pre- and post-processing of astro images.

…Additionally, I have prepared a compilation of my own notes on YouTube videos by other people, which I will discuss briefly and make available on my website, Astronomy Notebook https://astronomynotebook.ca/, for your future reference.

…Combining a verbal run-through of the Siril software with my compiled notes and links for further reading will be the most efficient way to get the most information across in the time allotted, instead of having people sit through the rituals of pre- and post-processing.

✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨

SIRIL is an astronomical image processing tool.

Siril works internally with 32-bit floating point FITS images. Users can configure the program to work in unsigned 16-bit FITS format to save computation time and disk space, at the expense of image accuracy.

However, if the goal is to get the best image possible, then stay in 32-bit for as long as possible.
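To see why staying in 32-bit matters, here is a minimal Python sketch (my own illustration, not Siril code). It assumes pixel values normalized to the range 0 to 1, and the two faint values are made-up numbers. Two slightly different faint pixels end up on the same 16-bit level, so the difference between them is lost:

```python
def to_uint16(value: float) -> int:
    """Quantize a normalized pixel value (0.0 to 1.0) to a 16-bit level."""
    clipped = min(max(value, 0.0), 1.0)
    return round(clipped * 65535)

# Two faint pixel values (hypothetical numbers) that differ slightly.
faint_a = 0.000010
faint_b = 0.000014

level_a = to_uint16(faint_a)
level_b = to_uint16(faint_b)

# Both values land on the same 16-bit level, so their difference is gone.
print(level_a, level_b)
```

In a floating point file the two values stay distinct, which is what leaves room for later stretching of faint detail.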

Siril can convert the SER file format, a simple image sequence format similar to uncompressed movies. This format is widely preferred by astrophotographers for planetary imaging but can also be used for DSO imaging. (SharpCap is one program that can capture in SER format.)

Features include :

-can run on Linux, Windows and Mac

-processing of the images can be via the use of scripts or manually

-can work and save in 32 bit (important to getting a higher dynamic range)

-Supported input types: 8-bit, 16-bit, 32-bit BMP, TIFF, JPEG, PNG, FITS and RAW DSLR images, SER and AVI video files

-preprocessing image calibration…darks, flats

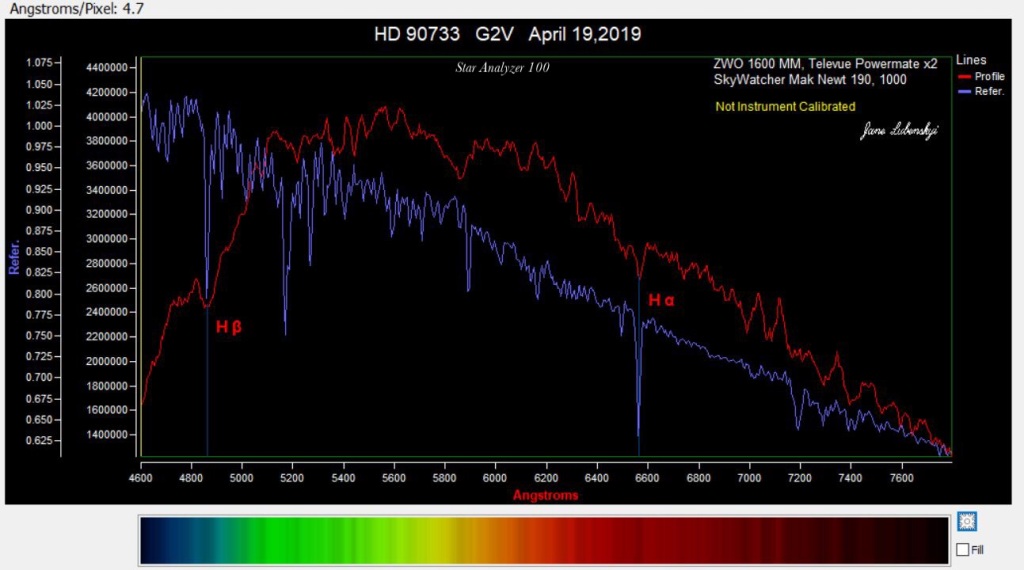

-image registration and alignment in a choice of modes, including single star, which is helpful for spectroscopy images of stars

-Image stacking, with optional additive or multiplicative normalization; modes include summing, median, and average with different rejection methods

-Enhancement and processing of final images including:

- Lightness/contrast cursors on each layer, different scaling modes (linear, log, square root, squared, asinh, histogram equalisation), negative and false colour rendering and clipping

- Background removal tool

- Cosmetic correction tool

- Channel extraction and combination

- Photometric and Color Calibration

In addition note what else Siril can do…

Siril has a photometric analysis tool, so it is possible to study exoplanet transits, the variability of stars or even occultations. This tool requires the installation of gnuplot.

………………………

Siril website:

Downloads…choose your platform

Documentation and Video Instruction

This is a good starting point tutorial for Siril image processing…

https://siril.org/tutorials/tuto-scripts/

Tutorial for a complete processing of astronomical images with Siril and its scripts

Other tutorial sources…

Frequently asked questions on Siril…

……………Notes on the use of Siril with accompanying reference YouTube videos………

Using Siril ….An Overview

After download, install and open Siril…

Siril only works directly with FITS files, so it is necessary to load and convert the images first. It saves all converted, preprocessed, registered and stacked files in a folder which you must specify in the setup when you first open the software.

Essentially the workflow runs from left to right along the tabs at the top right of the Siril screen, starting at “Conversion”. This first step changes the files into a format that Siril can use. We then proceed to preprocessing, that is, calibration with darks, bias and flats, using stacked masters that you have to create beforehand in Siril. This is followed by registration (alignment) and finally stacking. From this point Siril has many features for image enhancement; the main ones include background extraction, cosmetic correction, deconvolution, photometric or regular color calibration, asinh stretching and histogram transformation.

Please note that, depending on your skill with Siril and the particular difficulty of the image, you may find it easier at some point to continue the image enhancements and tweaks in other software. Again, try to stay in 32-bit for as long as possible before reducing the bit depth…

👉As a side note regarding reducing bit depth, I have found that noise can be significantly reduced just by going from 32-bit to 16-bit, so you do not need to put all your effort into reducing noise at the 32-bit level.

So to continue….Save in Siril as 32 bit…

I recommend saving the image you have processed so far in Siril (after doing your histogram stretch to make the image non-linear) as a 32-bit TIFF file, choosing “embed adjustments” upon saving.

From this point on, which will not be the topic of this talk, I use GIMP and RawTherapee, which are both 32-bit working image environments…

I later save to 16-bit TIFF and use Photoshop CS2 for its masking and layers, and to help reduce the size and color of my stars. I also use Luminar to bring out more detail using its terrific and versatile brush tool.

………….

Working Examples

Online YouTube Links and my associated notes

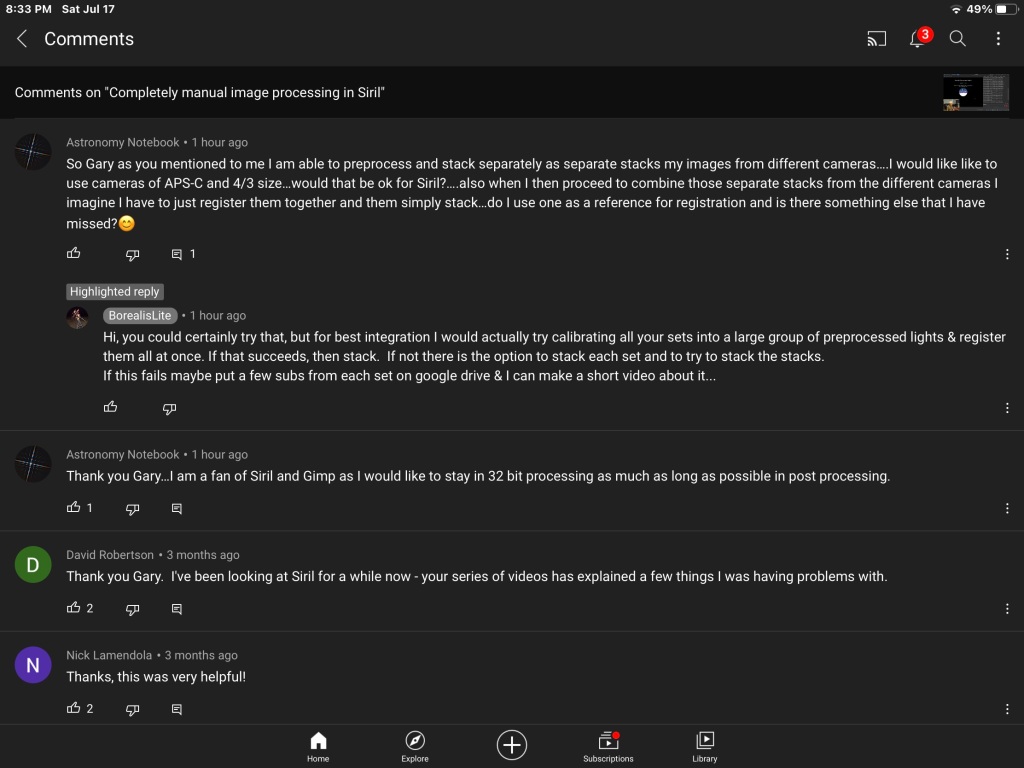

Referring to the video below…

Completely manual image processing in Siril

First convert your files into a format that Siril can use.

As soon as this is done a sequence in Siril is created. Sequences are the basis of the workflow…moving from left to right on the top right hand side of the Siril main window.

(Note: click “symbolic link” if you already have FITS files.)

If you have calibration frames do not select “debayer” at this point.

For bias calibration frames, a sequence is then created. There is no need to preprocess or register; just go to stacking and choose Median with no normalization.

To erase the files in the console window, click on the bottom right icon.

Do the same for darks.

Do the flats next, but now, if you want to preprocess them with the bias, choose preprocess and find your stacked master bias file.

After preprocessing, there is no need to register.

Go to Stack, choose “median” and now use “multiplicative normalization”.

Now clear the console and add the lights. Name the sequence and hit convert.

Note that the images are still in B&W at this stage because debayer has not been chosen yet.

If you do not have darks, check offset and use the master offset, i.e. the stacked bias created before.

Check flats and use the stacked master flat file.

But if you have darks, do not check offset; that is, do not use the master bias file. (Why? Because the darks already contain the bias signal, and your flats have already been preprocessed with the bias.)

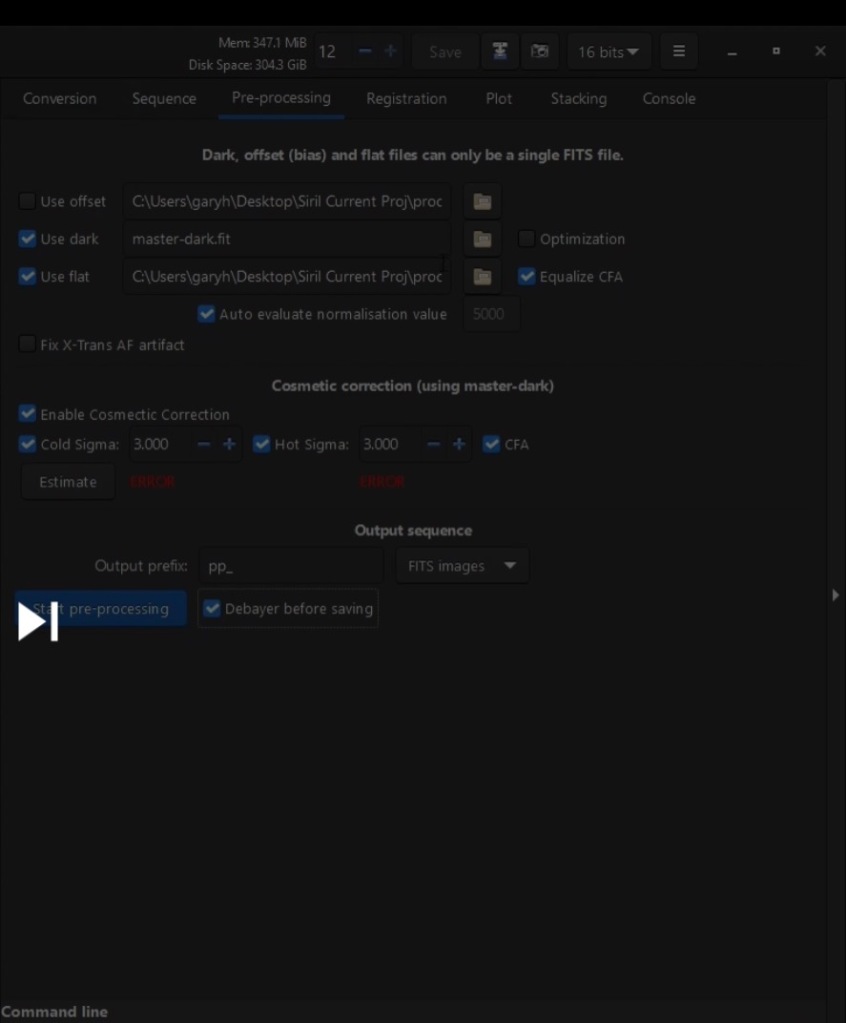

While still in PreProcessing, uncheck “optimization”, check “Equalize CFA” and “cosmetic correction”, and choose “debayer”… see image below:

Note: If you follow the workflow where you subtract the darks from the lights, as in this video, you do not check optimization.

This is what should be checked…

Click on Start PreProcessing.
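The arithmetic behind these calibration choices can be sketched per pixel as follows. This is a simplified Python illustration under my own assumptions (one pixel at a time, made-up numbers, a function name of my choosing), not Siril's actual code: the master dark, which already contains the bias, is subtracted from the light, and the result is divided by the master flat normalized to its mean.

```python
def calibrate_pixel(light: float, dark: float, flat: float, flat_mean: float) -> float:
    """Subtract the master dark, then divide by the normalized master flat.

    Dividing by (flat / flat_mean) is written here as multiplying by
    flat_mean / flat.
    """
    return (light - dark) * flat_mean / flat

# Hypothetical values: this pixel sits in a vignetted corner where the
# master flat is only 80% of its mean level.
corrected = calibrate_pixel(light=1200.0, dark=200.0, flat=16000.0, flat_mean=20000.0)
print(corrected)
```

The corner pixel is brightened after the dark is removed, which is how the flat compensates for vignetting and dust shadows.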

Now go to Sequence, where you can see the files just created by choosing “open frame list”.

Type “close” in the command line at the bottom of the console to free up memory at this point.

Now go to Register: choose the preprocessed sequence, choose Global Star Alignment, and choose Green as the registration layer.

The screen should be filled out as in image below…

The Plot in the next tab will then give an idea of image quality.

Choose the leftmost icon at the bottom of the console to open the frame list.

Now within the frame list window choose FWHM on the top right of this window to sort the images.

Here you can check quality visually and uncheck image files you do not want to be in the subsequent stack by clicking on the actual plot window.

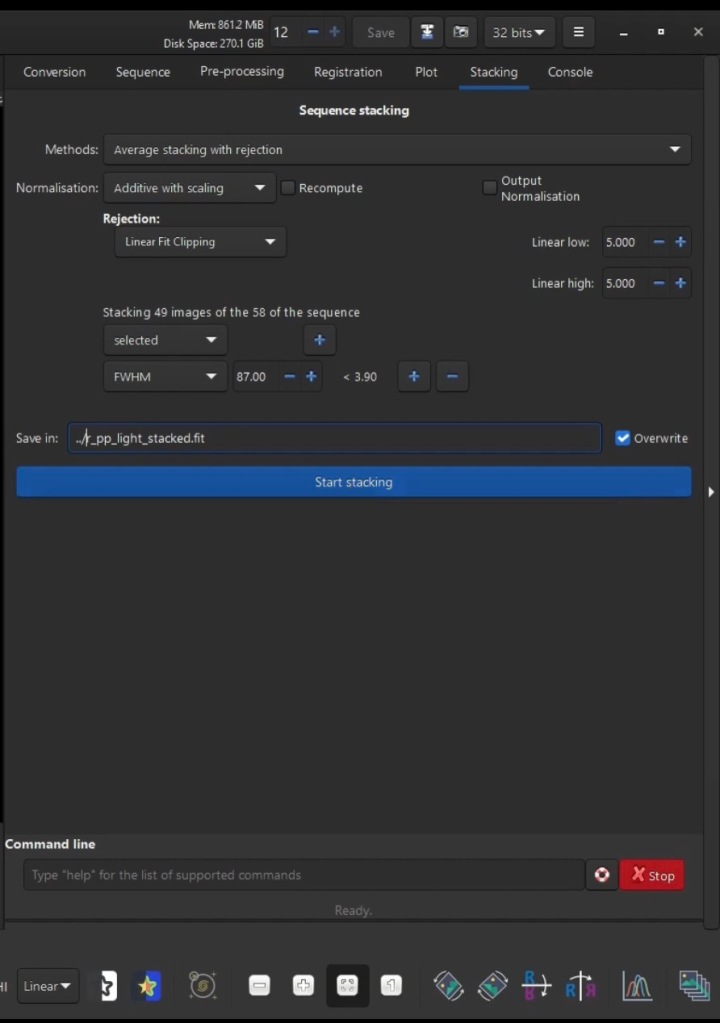

Now Go to Stacking…

Set up the Stacking Window like this…

Choose Linear Fit as the rejection method, as it is more modern and is what PixInsight uses.
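To give a feel for what a rejection method does during stacking, here is a simplified Python sketch for a single pixel position across several frames. It is my own illustration using a median-based clip, not Siril's Linear Fit algorithm, and the pixel values and the 3-sigma threshold are assumptions:

```python
from statistics import mean, median

def stack_pixel(values, sigma=3.0):
    """Average the values after rejecting outliers far from the median."""
    center = median(values)
    # Median absolute deviation, scaled to approximate a standard deviation.
    mad = median([abs(v - center) for v in values])
    spread = 1.4826 * mad
    if spread == 0:
        return center
    kept = [v for v in values if abs(v - center) <= sigma * spread]
    return mean(kept)

# Same pixel in six frames (hypothetical values); the last frame was
# crossed by a satellite trail.
frames = [100, 102, 98, 101, 99, 900]

plain_average = mean(frames)
stacked = stack_pixel(frames)
print(plain_average, stacked)
```

The plain average is pulled well above the true level by the one bad frame, while the version with rejection ignores it.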

Now a little post processing…

Check image with auto stretch and the histogram modes…

While in histogram mode, you can crop the image to remove stacking artifacts.

👉Note: You really should crop as a first step as the stacking artifacts will interfere in subsequent post processing enhancements.

Go to AutoStretch mode

Do Background Extraction for gradient problems.

👉Note: you can add sample dots with a left click and remove them with a right click.

Go to Histogram mode and click on the Undo and Redo buttons to compare the change.

Go to AutoStretch

Can first do a Green Reduction if the noise in green is very predominant.

Do Color Calibration…do the background and the white calibration here.

May need to remove more green noise again at this point.

Can also do after this a Photometric Color Calibration while still in AutoStretch mode.

👉Note: you can run this process again…gets rid of green and fixes background.

👉Note: Since this step involves a plate solve we could label the image…click on 3rd icon at bottom left below console.

👉Note: Can take a snap shot in png file format…click on icon which is 2nd to the right of the save button on top of console.

Next, run Cosmetic correction, which is particularly useful if you have no dark frames.

At this point it is a good idea to save as a 32-bit FITS file in case you ever have to come back and do a re-stretch, for instance.

Now start stretching. First change to linear mode.

👉It is a good idea not to stretch too much in Siril. Why? Because you can stretch further in GIMP from the saved 32-bit floating point file (or in PS from the saved 16-bit integer file), using masks so as not to bloat the stars.

So for the stretching, do an Asinh transformation, which is a color-preserving technique.

You could do, say, two iterations of this and then do a Histogram stretch…(see the shortcut icon at bottom right.)

You could then do another Asinh.
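The colour-preserving stretch used above is based on the inverse hyperbolic sine (asinh). Here is a small Python sketch of one common way to write such a stretch for a single RGB pixel. The formula, the stretch factor `beta` and the pixel values are my own assumptions for illustration, and Siril's exact implementation may differ. The key idea is that all three channels are multiplied by the same factor, so their ratios (the colour) stay the same:

```python
import math

def asinh_stretch(rgb, beta=100.0):
    """Stretch one RGB pixel (values 0.0 to 1.0) with a shared asinh factor."""
    lum = sum(rgb) / 3.0
    if lum <= 0:
        return (0.0, 0.0, 0.0)
    # One scale factor computed from the brightness, applied to every channel.
    scale = math.asinh(beta * lum) / (lum * math.asinh(beta))
    # Clipping at 1.0 only affects very bright pixels.
    return tuple(min(1.0, channel * scale) for channel in rgb)

# A faint reddish pixel (hypothetical values): red is twice green and blue.
faint = (0.02, 0.01, 0.01)
stretched = asinh_stretch(faint)
print(stretched)
```

The pixel comes out much brighter, but red is still twice green and blue, which is why the colour survives the stretch.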

Now save by choosing the save icon (not the “Save” button) as a 32-bit floating point TIFF if using GIMP, or as a 16-bit integer TIFF for PS, and check embed data.

👉Important Note: Do not choose the button with the printed word “save”, as this would overwrite your original linear FITS file.

…………….

How To Run Scripts in Siril

The video below shows where and how to install scripts into Siril.

This video shows how to work with OSC images using scripts.

After the scripts have run for preprocessing, registration and stacking, further enhancements are done in this video:

AutoStretch mode

Crop Image (choose maintain aspect ratio)

Choose 2nd white square icon from right “fit to view” to fit to view after crop.

Background Extract …really necessary if you did not calibrate with flat frames.

Photometric Color Calibration

👉Note: when choosing the focal length for a DSLR, use just what is indicated on the lens, not a value calculated with the crop factor.
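The focal length matters here because, together with the camera's pixel size, it sets the image scale that the plate solve has to match. Here is a small Python sketch of the standard image scale formula; the lens, pixel size and crop factor values are made up for illustration:

```python
def pixel_scale_arcsec(pixel_size_um: float, focal_length_mm: float) -> float:
    """Image scale in arcseconds per pixel."""
    return 206.265 * pixel_size_um / focal_length_mm

# Hypothetical setup: a 200 mm lens on a camera with 4.3 micron pixels.
scale = pixel_scale_arcsec(pixel_size_um=4.3, focal_length_mm=200.0)

# What you would get by wrongly multiplying the focal length by a 1.6 crop factor.
wrong_scale = pixel_scale_arcsec(pixel_size_um=4.3, focal_length_mm=200.0 * 1.6)

print(round(scale, 2), round(wrong_scale, 2))
```

The crop factor only describes the field of view compared with a full frame sensor; it does not change the true focal length, so using it here would give the solver a wrong scale.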

Change to Linear mode

Choose Asinh and run twice

Choose Histogram Transformation

👉Don’t clip more than 0.1% of shadows using the left button on the histogram.
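As a rough illustration of what the 0.1% limit means, this Python sketch finds the highest black point that clips no more than a given fraction of the pixels. It is my own simplified example on a made-up list of pixel values, not how Siril computes its histogram:

```python
def black_point(pixels, max_clip_fraction=0.001):
    """Return the highest black point that clips at most the given fraction."""
    ordered = sorted(pixels)
    allowed = int(len(ordered) * max_clip_fraction)
    # Pixels strictly below this value would be clipped to black.
    return ordered[allowed]

# A made-up image of 10,000 pixels with values 0 to 9999.
pixels = list(range(10000))

point = black_point(pixels)
clipped = sum(1 for p in pixels if p < point)
print(point, clipped)
```

Moving the shadow slider further right than this point starts throwing away faint signal along with the background.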

Green Reduction

👉In this video, Green Reduction is recommended only after stretching the image.

Choose Color Saturation

Can choose Global or individual colour.

Click on the Undo and Redo buttons on the top left to compare before and after.

At this point save as Tiff as 16 bit integer for PS or 32 bit floating point for Gimp.

👉Note: Near the end of this video, when the fellow switches from Siril to PS processing, he describes a terrific method, which I have not seen used quite like this elsewhere, for enhancing a DSO using the “High Pass Filter” while at the SAME TIME NOT affecting the stars.

👉Note: A frequent problem is the chromatic aberration of stars, which can be fixed as this video demonstrates.

UPDATED Astrophotography Image Processing – Easiest and Best Method for 2021

………

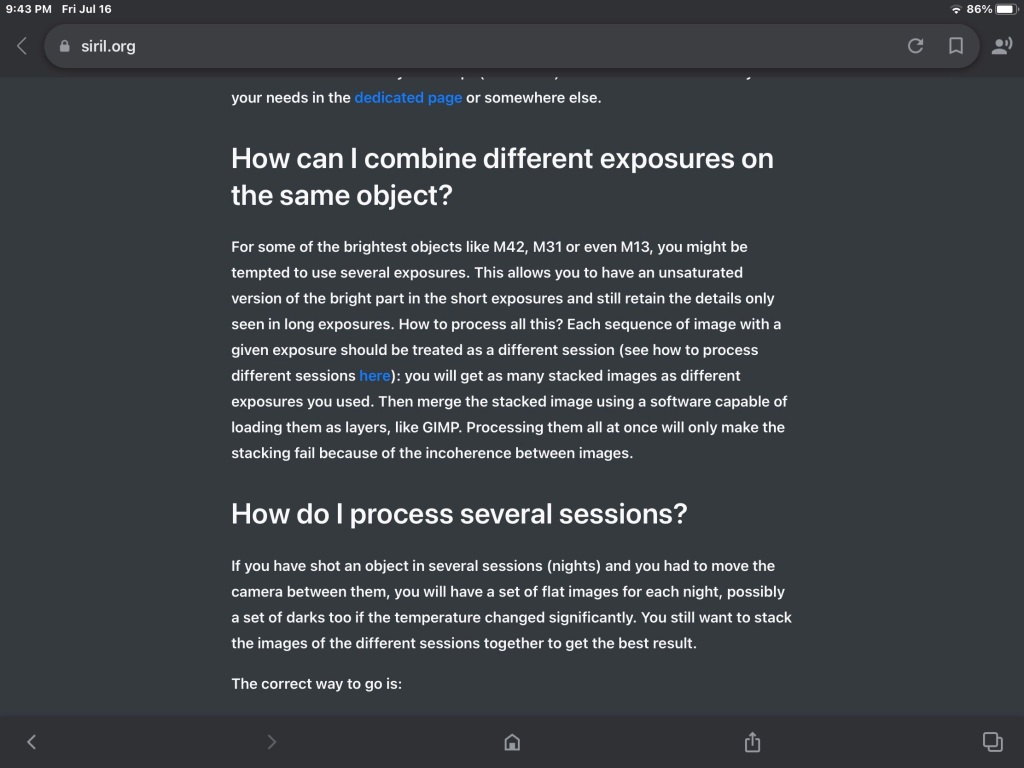

👉How to setup Siril for preprocessing and processing images that have different exposure levels…

👉 So to my understanding, according to the tutorial below, multiple sets of images taken on different nights (and hopefully also with different cameras) can be combined by first preprocessing each camera or exposure level separately, and continuing the index numbering in Siril where the previous set left off. That way Siril will treat them as a single sequence, which you then register and stack as usual.

Combining sequences in Siril – Wolf Creek Observatory

……………..

👉Dual band channel extraction

This tutorial is useful for those who have an OSC colour image created using a duo band filter. Frequently one would want to augment the usually weaker OIII detail, and really the best way is to first extract the Ha and OIII as two different images, work on them separately and then recombine them, which can be done in Siril.

Note: This process, done with scripts, requires that you make subdirectories for Biases, Flats, Darks and Lights in your working Siril directory, or the script will not work. For other scripts in Siril, pay attention to what preliminary requirements are needed for the script to be run successfully.
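If you would rather not create the folders by hand each time, a few lines of Python can do it. This is just a convenience sketch of my own; the lowercase folder names are an assumption, so check the comments at the top of the script you plan to run for the exact names it expects:

```python
from pathlib import Path

# Folder names assumed here; confirm them against the script you will run.
SUBFOLDERS = ("biases", "flats", "darks", "lights")

def make_siril_folders(working_dir):
    """Create the calibration and light subfolders inside the working directory."""
    created = []
    for name in SUBFOLDERS:
        folder = Path(working_dir) / name
        # exist_ok avoids an error if the folder is already there.
        folder.mkdir(parents=True, exist_ok=True)
        created.append(folder)
    return created
```

Call `make_siril_folders(...)` with the path to your own Siril working directory, then copy your frames into the matching folders before running the script.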

Duo narrowband OSC processing using Siril and some Photoshop

………………………………

For my additional notes on image processing (Gimp, PS, Raw Therapee):

MY Ongoing Image Processing Notes Part 1

My compilation of the best tutorials, with my own associated notes, on how to accomplish further enhancements such as star color, star reduction, noise reduction, improving detail in nebulae, reducing bloating in the centre of galaxies and much more.

Clear Skies,

StarJane 😊

✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨✨